An AI-native engineering team is a software development unit that embeds AI agents and LLMs into every phase of the SDLC as primary collaborators, not optional add-ons. Teams operating this model report 25–50% faster delivery cycles, with engineers shifting from writing code to reviewing, steering, and owning outcomes. Kyanon Digital builds AI-native engineering teams for enterprises across Singapore and Southeast Asia using a governance-first approach based on the Centaur Model.

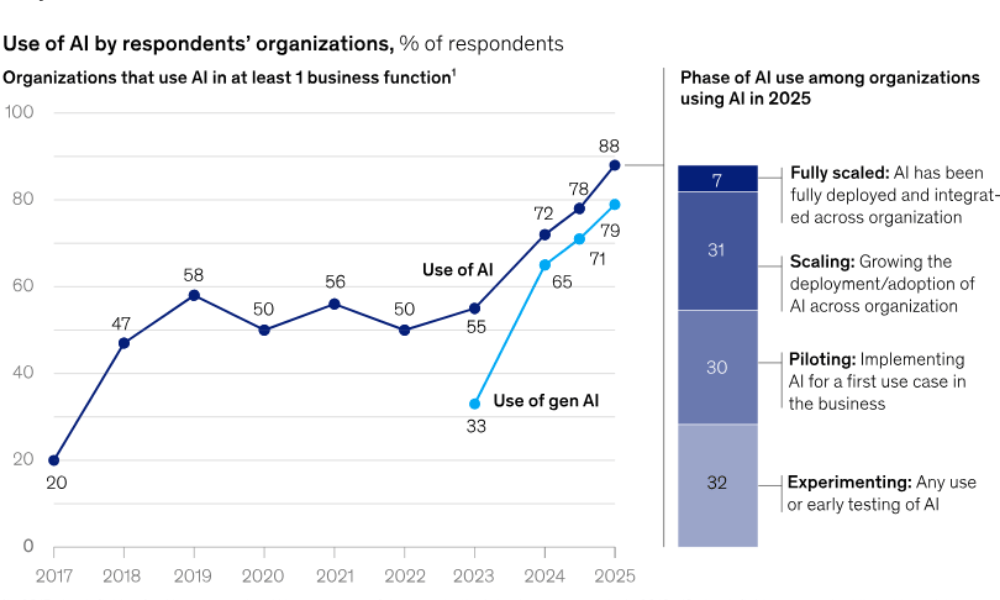

This is becoming a strategic topic because software delivery is shifting from “people using AI tools” to workflows where AI is embedded across design, coding, testing, security, and operations. In McKinsey’s 2025 global survey, 88% of respondents said their organizations regularly use AI in at least one business function, yet only about one-third said AI programs had begun to scale, showing a large gap between experimentation and operational redesign.

That gap matters. Many businesses have added copilots, chat tools, or code generation into existing workflows, but most still have not redesigned delivery around AI.

The issue is no longer whether AI is available. The issue is whether enterprises can build a delivery model that is faster without creating security, quality, and governance debt.

This guide explains what an AI-native engineering team is, how it differs from an AI-assisted team, what the Centaur Model means in practice, how enterprises should evaluate fit, and what to look for in an external partner such as Kyanon Digital.

Key takeaways

- AI-native is an architectural shift, not a tooling upgrade. It redesigns every workflow around AI capability.

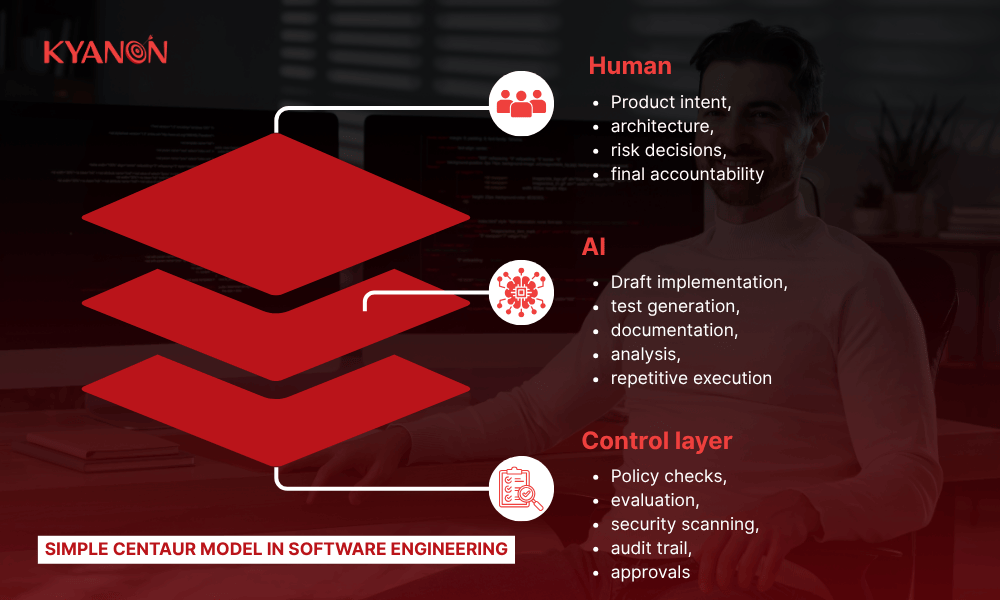

- The Centaur Model defines how these teams operate: AI agents handle execution, and engineers handle judgment, architecture, and outcomes.

- Teams applying AI across the full SDLC report 25–30% productivity gains; teams with governance-first approaches reach 30–50% faster delivery cycles.

- Governance is non-negotiable. All AI-generated code must pass automated security gates before human review.

- AI-native teams are best suited for net-new product builds under time-to-market pressure, not legacy monolith maintenance.

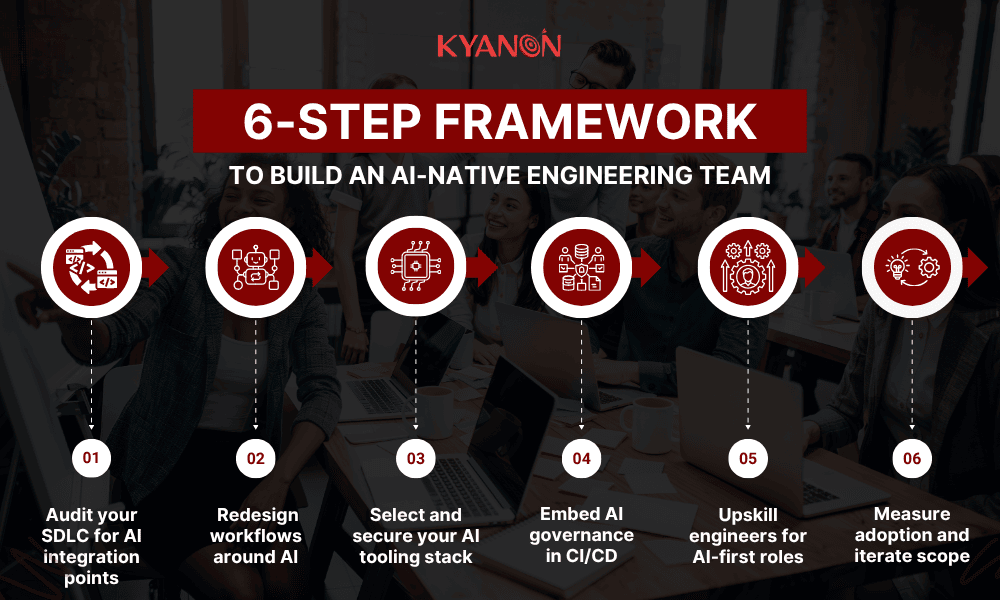

- The 6-step build framework (Audit → Redesign → Tooling → Governance → Upskill → Measure) is the proven path to transition.

Further reading:

- AI And Software Development

- The Future of AI-Native Software in Singapore

- Vietnam’s Advantages for Enterprise AI Outsourcing

- Top GenAI Opportunities for Tech Products by Gartner

The problem with “using AI” vs. being AI-native

Most engineering teams today treat AI as a productivity plugin, a copilot to autocomplete code, or a chatbot to brainstorm solutions.

That is not AI-native. It is AI-assisted.

AI-assisted teams often:

- Add an AI assistant for code completion

- Use chat tools for brainstorming

- Generate test cases in isolated tasks

- Keep the same review, release, and governance flow

That can improve local productivity, but it does not create an AI-native system.

What is the difference between an AI-assisted team and an AI-native team?

| Dimension | AI-assisted team | AI-native team |
| --- | --- | --- |
| AI role | Optional productivity layer | Default execution layer |
| Workflow design | Existing SDLC stays mostly the same | SDLC is redesigned around AI capability |
| Human role | Do most work directly | Set intent, review, approve, and steer |
| QA and security | Often applied after generation | Embedded as default gates throughout |
| Tooling | Point tools | Integrated workflow stack |
| Scaling potential | Uneven and person-dependent | Higher, if governance is strong |

What this means for enterprises

- An AI-assisted team can raise output in pockets.

- An AI-native team aims to change delivery economics at the system level.

- The decision is architectural: workflow design, trust model, and governance decide the outcome more than the model brand or prompt quality.

McKinsey’s 2025 survey indicates that high performers are more likely to redesign workflows, define human validation rules, and track AI-related KPIs.


What defines an AI-native engineering team in 2026

What is an AI-native engineering team?

AI-native software engineering embeds AI into every phase of the SDLC, from design to deployment, enabling AI to autonomously or semi-autonomously handle a significant share of tasks across the entire lifecycle. The role of developers shifts from implementation to orchestration.

Four core characteristics of an AI-native engineering team

| Characteristic | What it means | Why it matters |
| --- | --- | --- |
| Agents take first-pass ownership | AI agents scope, draft architecture, and write boilerplate. Engineers review, override, and steer intent. | Eliminates the bottleneck of developers doing low-value code generation manually. |
| Engineers shift from doing to reviewing | Primary value = system judgment, architectural decisions, product strategy, not code generation. | Engineers focus on problems that require human reasoning; AI handles execution. |
| End-to-end automation as default | Unit testing, security checks, and debugging run automatically in CI/CD. Human review is the exception. | Delivery velocity improves only when the entire pipeline, not just coding, is automated. |
| Self-healing systems | Pipelines detect and respond to failures proactively. No manual intervention for routine failures. | Reduces operational overhead and protects SLOs at scale without growing the ops team. |

1. AI agents take first-pass ownership

AI handles draft tasks first: code scaffolds, test suggestions, issue triage, refactoring proposals, documentation drafts, and operational recommendations.

2. Human value shifts from doing to reviewing

People move toward design judgment, acceptance criteria, exception handling, and business-risk decisions.

3. Automation is the default across the pipeline

Testing, scanning, policy checks, and release validation run continuously. AI-generated output is treated as untrusted until it passes controls.

4. Systems move toward self-healing operations

Detection, root-cause suggestions, rollback recommendations, and remediation playbooks become more automated over time.

The Centaur Model – How AI-native teams actually work

What is the Centaur Model in software engineering?

The Centaur Model is an AI-native engineering approach where AI handles first-pass work, while engineers guide intent, architecture, and quality control. McKinsey reported in 2025 that leading AI-driven software organizations achieved 16–30% improvements in time to market and productivity, plus 31–45% higher software quality.

Simple operating model
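One way to picture this operating model is the minimal sketch below. It is not Kyanon Digital's implementation: `Draft`, `run_centaur_cycle`, and the stub agent, gates, and reviewer are all hypothetical names chosen for illustration. The only idea it encodes is the ordering described above: the AI drafts first, automated gates run next, and a human makes the final call.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Draft:
    """First-pass output from an AI agent (hypothetical structure)."""
    task: str
    content: str
    gate_failures: List[str] = field(default_factory=list)
    approved: bool = False


# Each callable is a stand-in for a real agent, automated check, or person.
Agent = Callable[[str], str]
Gate = Callable[[Draft], Optional[str]]  # returns a failure message, or None
Reviewer = Callable[[Draft], bool]


def run_centaur_cycle(task: str, agent: Agent, gates: List[Gate],
                      reviewer: Reviewer) -> Draft:
    """AI drafts first, automated gates run next, a human decides last."""
    draft = Draft(task=task, content=agent(task))
    for gate in gates:
        failure = gate(draft)
        if failure is not None:
            draft.gate_failures.append(failure)
    if draft.gate_failures:
        # Output that fails a gate never reaches the human reviewer.
        return draft
    draft.approved = reviewer(draft)
    return draft


# Illustrative stubs only; a real setup would call an agent and real checks.
def stub_agent(task: str) -> str:
    return f"# {task}\ndef handler():\n    return 'ok'\n"


def non_empty_gate(draft: Draft) -> Optional[str]:
    return None if draft.content.strip() else "draft is empty"


def no_todo_gate(draft: Draft) -> Optional[str]:
    if "TODO" in draft.content:
        return "draft contains an unfinished TODO"
    return None


def stub_reviewer(draft: Draft) -> bool:
    return True  # stands in for an engineer approving the change


result = run_centaur_cycle("add health endpoint", stub_agent,
                           [non_empty_gate, no_todo_gate], stub_reviewer)
print(result.approved, result.gate_failures)
```

Keeping the gates ahead of the reviewer is the design choice that matters here: engineers spend their attention only on drafts that have already cleared the automated checks.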

Why the Centaur Model is more realistic than full autonomy

Fully autonomous engineering is still a poor fit for most enterprises.

Reasons:

- Enterprise systems are full of hidden context

- Regulatory and security reviews still need explicit controls

- Quality failures are expensive

- AI outputs vary with context quality, tool access, and evaluation rigor

McKinsey’s 2025 survey found that only 23% of respondents said their organizations were scaling an agentic AI system anywhere in the enterprise, while 39% were still experimenting. That suggests most organizations are moving toward human-supervised AI execution, not hands-off autonomy.

How to build an AI-native engineering team in 2026

Building an AI-native team requires six sequential steps: auditing your current SDLC, redesigning workflows around AI capability, selecting a secure tooling stack, embedding governance in CI/CD, upskilling engineers for AI-first roles, and establishing measurement systems to expand AI ownership over time.

Step 1: Audit your SDLC for AI integration points

- Map the entire development pipeline from requirements to deployment.

- Identify which phases remain 100% manual; these are the highest-leverage AI integration opportunities.

- Flag handoff points where AI agents can own first-pass execution.

- Measure current baseline metrics: lead time, defect rate, deployment frequency, and code review turnaround.
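The baseline metrics in the last bullet can be computed from simple delivery records. The sketch below is an illustration under stated assumptions: the `Change` fields are hypothetical and not the schema of any specific tool, each change is assumed to correspond to one deployment, and defect rate is taken as the share of changes that later caused a defect.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Dict, List


@dataclass
class Change:
    """One shipped change; field names are illustrative assumptions."""
    first_commit: datetime
    review_requested: datetime
    review_completed: datetime
    deployed: datetime
    caused_defect: bool


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def baseline(changes: List[Change], period_days: int) -> Dict[str, float]:
    """Summarize lead time, review turnaround, deploy frequency, defect rate."""
    if not changes:
        raise ValueError("need at least one change to compute a baseline")
    return {
        "median_lead_time_hours": median(
            hours(c.deployed - c.first_commit) for c in changes),
        "median_review_turnaround_hours": median(
            hours(c.review_completed - c.review_requested) for c in changes),
        # Assumes one deployment per change.
        "deployments_per_week": len(changes) / (period_days / 7),
        "defect_rate": sum(c.caused_defect for c in changes) / len(changes),
    }


def make_change(start: datetime, lead_h: int, review_h: int,
                defect: bool) -> Change:
    """Helper that builds sample data for the example below."""
    requested = start + timedelta(hours=1)
    return Change(
        first_commit=start,
        review_requested=requested,
        review_completed=requested + timedelta(hours=review_h),
        deployed=start + timedelta(hours=lead_h),
        caused_defect=defect,
    )


day0 = datetime(2026, 1, 5, 9, 0)
sample = [
    make_change(day0, lead_h=24, review_h=2, defect=False),
    make_change(day0 + timedelta(days=2), lead_h=48, review_h=4, defect=True),
    make_change(day0 + timedelta(days=5), lead_h=36, review_h=3, defect=False),
    make_change(day0 + timedelta(days=9), lead_h=12, review_h=1, defect=False),
]
metrics = baseline(sample, period_days=14)
print(metrics)
```

Medians are used instead of means so that one unusually slow change does not dominate the baseline; that choice is a judgment call, not something the audit step prescribes.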

Gartner says businesses should not limit AI to coding. It highlights upstream work, such as requirements gathering, user story creation, and ideation, as higher-value opportunities and notes that organizations with more than 50% AI adoption report greater time savings in these early-stage activities.

Step 2: Redesign workflows around AI, not onto existing ones

- Do not automate the old process. Redesign a new process with AI as the default executor.

- Redefine roles: engineers become reviewers and system architects, not code producers.

- Redesign sprint planning, ticket scoping, and code review with the AI execution layer in mind.

Key risk: Teams that layer AI onto broken workflows get faster broken workflows. The workflow architecture is the differentiator, not the AI model.

Step 3: Select and secure your AI tooling stack

AI-native teams require purpose-built tooling across five categories:

| Category | Example tools | Primary function |
| --- | --- | --- |
| AI coding agents | Cursor, GitHub Copilot Enterprise, Amazon Q Developer | IDE-integrated code generation, review, and refactoring |
| LLM orchestration | LangChain, LlamaIndex, custom RAG pipelines | Connect agents to internal codebases, docs, and context |
| AI-assisted test generation | Diffblue Cover, CodiumAI, Testim | Automated unit, integration, and regression test creation |
| Security scanning (AI-augmented) | Semgrep, Snyk, GitHub Advanced Security | Automated vulnerability detection in AI-generated code |
| AI-driven observability | Datadog AI, Dynatrace Davis, Sentry with AI | Automated anomaly detection, root cause analysis, self-healing |

Establish clear data security boundaries before deployment. AI agents interacting with production codebases require explicit access controls, data residency policies, and IP protection guardrails, especially relevant for enterprises operating under regional regulatory requirements.
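As a rough illustration of what an explicit access boundary could look like, the sketch below checks whether an agent may read a repository path. Everything in it is an assumption made for the example: `AgentPolicy`, `may_read`, the glob patterns, and the region string are hypothetical, and a real deployment would enforce these rules in the platform or gateway that hosts the agent rather than in application code.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Tuple


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical access policy for one AI agent."""
    readable: Tuple[str, ...]         # glob patterns the agent may read
    denied: Tuple[str, ...]           # patterns that always win over readable
    allowed_regions: Tuple[str, ...]  # where model inference may run


def may_read(policy: AgentPolicy, path: str, model_region: str) -> bool:
    """Deny by default: region first, then deny list, then allow list."""
    if model_region not in policy.allowed_regions:
        return False  # data residency: code may not leave approved regions
    if any(fnmatchcase(path, pattern) for pattern in policy.denied):
        return False  # secrets and credentials are never exposed
    return any(fnmatchcase(path, pattern) for pattern in policy.readable)


policy = AgentPolicy(
    readable=("services/*", "docs/*"),
    denied=("*.env", "secrets/*"),
    allowed_regions=("ap-southeast-1",),  # illustrative region label
)

print(may_read(policy, "services/api/app.py", "ap-southeast-1"))
print(may_read(policy, "services/api/.env", "ap-southeast-1"))
print(may_read(policy, "services/api/app.py", "us-east-1"))
```

The deny list is evaluated before the allow list so that a broad readable pattern such as `services/*` cannot accidentally expose a credentials file underneath it.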

Step 4: Embed AI governance in CI/CD

- Treat all AI-generated code as untrusted by default until it passes automated gates.

- Require automated test gates and security scans before any AI-generated code can be merged.

- Implement hallucination checks: verify that imported libraries are legitimate and business logic aligns with product requirements.

- Apply Gartner’s calibrated governance model: human-in-the-loop for high-criticality work; human-on-the-loop for lower-stakes automation.

This is critical because enterprise GenAI use is expanding faster than control maturity. Palo Alto Networks reported GenAI traffic surged more than 890% in 2024, DLP incidents more than doubled, and the average organization used 66 GenAI apps, with 10% classed as high risk.
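The gates in this step can be sketched as a small merge check. This is a simplified illustration, not a complete governance system: the allowlist approach to spotting hallucinated libraries, the function names, and the three outcome labels are assumptions, `fastjsonx` is a made-up package name, and the check covers only imports in Python source. Verifying that business logic matches product requirements still needs tests and human review.

```python
import ast
from typing import Iterable, List, Set


def imported_modules(source: str) -> Set[str]:
    """Collect top-level package names imported by a piece of Python code.

    Raises SyntaxError if the generated code does not parse.
    """
    modules: Set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Relative imports (level > 0) point inside the repo; skip them.
            if node.level == 0 and node.module:
                modules.add(node.module.split(".")[0])
    return modules


def unapproved_imports(source: str, approved: Iterable[str]) -> List[str]:
    """Return imports missing from the approved dependency list."""
    return sorted(imported_modules(source) - set(approved))


def merge_decision(source: str, approved: Iterable[str], tests_passed: bool,
                   scan_passed: bool, high_criticality: bool) -> str:
    """Treat AI-generated code as untrusted until every gate passes."""
    if not (tests_passed and scan_passed):
        return "blocked"
    if unapproved_imports(source, approved):
        return "blocked"
    if high_criticality:
        return "human_approval_required"   # human-in-the-loop
    return "merge_with_human_monitoring"   # human-on-the-loop


generated = """
import json
import requests
from fastjsonx import loads
from .local import helper
"""
APPROVED = {"json", "requests"}  # hypothetical approved dependencies

problems = unapproved_imports(generated, APPROVED)
print(problems)
print(merge_decision(generated, APPROVED, tests_passed=True,
                     scan_passed=True, high_criticality=False))
```

An allowlist is stricter than checking whether a package exists on a public registry, which matters because a hallucinated name may already have been registered by someone else.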

Step 5: Upskill engineers for AI-first roles

The role transformation required is significant. Engineers must develop new competencies that have no direct precedent in traditional software development:

- Prompt engineering and AI instruction design, directing agents to produce accurate, contextually correct outputs.

- AI output evaluation, identifying hallucinations, architectural misalignments, and logic errors in generated code.

- Agentic workflow management, orchestrating multi-agent systems and managing inter-agent dependencies.

- System verification over code generation: the new primary skill is catching what AI gets wrong, not writing what AI can write.

Anthropic reported that from February to August 2025, the share of engineering tasks using AI for new feature implementation rose from 14.3% to 36.9%, while code design and planning increased from 1.0% to 9.9%, showing that AI is being used for more complex engineering work.

Anthropic also observed that teams were becoming more full-stack in practice, although it did not present this as a quantified performance benchmark.

Step 6: Measure adoption and iterate scope

- Baseline all delivery and quality metrics for one quarter before expanding AI ownership.

- Track: time-per-task, defect capture rate, bug rate, deployment frequency, PR review time.

- Expand AI agent ownership progressively as trust accumulates through validated outcomes.

- Shift performance evaluation from ‘volume of commits’ to defect capture rate and deployment frequency.
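The rule "expand ownership as trust accumulates" can be made explicit with a simple comparison against the baseline. The sketch below is one possible reading: `Snapshot`, `can_expand_scope`, and the 5% tolerance are illustrative assumptions, and each team would set its own metrics and thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Quarterly delivery metrics (hypothetical selection)."""
    defect_rate: float           # share of changes that caused a defect
    deployments_per_week: float
    median_review_hours: float


def can_expand_scope(baseline: Snapshot, current: Snapshot,
                     tolerance: float = 0.05) -> bool:
    """Expand AI ownership only if quality and flow did not regress.

    The tolerance allows small noise; its value is an assumption.
    """
    quality_held = current.defect_rate <= baseline.defect_rate * (1 + tolerance)
    throughput_held = current.deployments_per_week >= baseline.deployments_per_week
    review_held = (current.median_review_hours
                   <= baseline.median_review_hours * (1 + tolerance))
    return quality_held and throughput_held and review_held


before = Snapshot(defect_rate=0.10, deployments_per_week=4.0,
                  median_review_hours=6.0)
improved = Snapshot(defect_rate=0.09, deployments_per_week=5.0,
                    median_review_hours=5.5)
regressed = Snapshot(defect_rate=0.15, deployments_per_week=6.0,
                     median_review_hours=5.0)

print(can_expand_scope(before, improved))
print(can_expand_scope(before, regressed))
```

Note that the second snapshot ships faster but is still rejected: higher deployment frequency does not justify wider AI ownership when the defect rate has climbed.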

Warning: KPMG’s AI Quarterly Pulse Survey found that 85% of leaders said the quality of organizational data is the biggest anticipated challenge to AI strategies in 2025, ahead of privacy, cybersecurity, and adoption. For businesses, that means AI works best when systems are clean, documented, and rich in usable context. Pushing AI into legacy or poorly documented environments too early often reduces consistency and slows real impact.

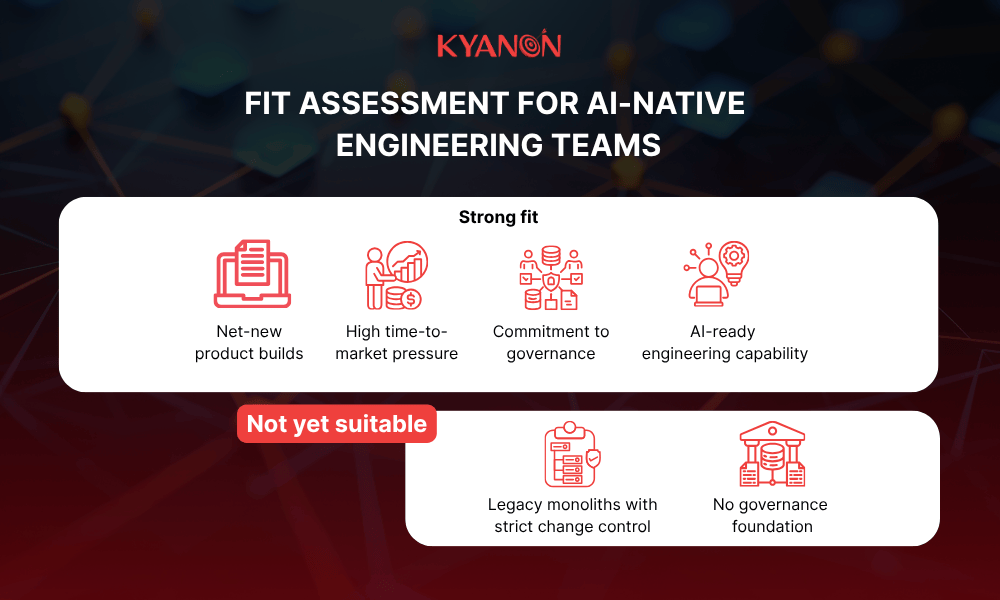

Is an AI-native team right for your organization?

What type of organization benefits most from AI-native engineering?

AI-native teams tend to fit best when a business has the following:

- Net-new digital products to build

- Strong time-to-market pressure

- Frequent iteration cycles

- Enough delivery volume to benefit from automation

- Willingness to invest in governance and enablement

- A platform mindset rather than one-off experimentation

Fit assessment for AI-native engineering teams

Usually a good fit

- Product roadmap changes often

- Engineering work includes repeated patterns

- Internal knowledge is documented well enough to ground AI

- Security and release controls can be automated

Usually not a strong fit yet

- Core systems are highly brittle legacy monoliths

- Every change requires heavy manual approval

- Documentation quality is poor

- Enterprise policy does not allow controlled AI access to development workflows

| Signal | Implication |
| --- | --- |
| Building a net-new product or platform | High fit: building AI-native from the start is significantly easier than retrofitting |
| Under pressure to ship faster with the same or smaller team | High fit: the Centaur Model directly addresses this constraint |
| Operating on a legacy monolith with minimal documentation | Lower fit: invest in documentation and modularization first |
| No current automated testing or CI/CD pipeline | Lower fit: governance infrastructure must be built before AI agent deployment |
| Willing to restructure engineering roles and upskill teams | High fit: organizational buy-in is the critical enabler |

How Kyanon Digital builds AI-native engineering teams

Kyanon Digital is a trusted, award-winning technology partner with deep expertise in AI-native engineering, delivering end-to-end digital solutions that drive real business impact for enterprises across Singapore and Southeast Asia.

- Starts with business use cases: defines AI opportunities based on real pain points, ROI, and data availability.

- Designs the right AI architecture: selects suitable models and builds custom RAG-based workflows around enterprise needs.

- Connects and structures enterprise data: ingests content from systems such as SharePoint, Notion, Google Drive, SQL, Dropbox, and Confluence into AI-usable formats.

- Builds with grounded AI workflows: uses retrieval-based approaches so outputs are based on trusted internal content, improving accuracy, transparency, and auditability.

- Deploys into enterprise environments: supports deployment on private cloud, client domain, or private SaaS, with emphasis on avoiding vendor lock-in.

- Combines agility with governance: uses Agile delivery, continuous feedback, and project governance frameworks built around transparency, accountability, and risk control.

- Builds with quality and security controls: highlights ISO 9001 quality management and ISO 27001 security management as part of delivery.

- Trains, monitors, and improves over time: includes onboarding, feedback-driven improvement, and scaling across more teams after deployment.

Case study: How Kyanon Digital built an AI-powered English learning platform with an AI-native engineering team

Kyanon Digital’s AI-powered English learning solution is a strong example of how an AI-native engineering team builds software with AI embedded at the core of the product and delivery model.

The following case study demonstrates Kyanon Digital’s AI-native engineering approach in an EdTech context. The same delivery model and governance framework apply directly to enterprise builds in Singapore and Southeast Asia.

Challenges

- Traditional English learning was often expensive, hard to access, and not personalized, making it difficult for learners to get real-time feedback on pronunciation, grammar, and comprehension.

- The platform also needed to support local payment methods, reduce dropout risk, and scale across a large, underserved learner base in Myanmar.

Solution

- Kyanon Digital built a mobile-first, AI-powered learning platform with speech recognition, NLP-based grammar correction, AI essay scoring, text summarization, adaptive learning paths, and a modular microservices architecture.

- The solution also included gamified learning, a custom CMS, and localized payment integration to improve engagement, operational flexibility, and market fit.

Results: The project launched a feature-complete MVP in 6 months and delivered AI-powered personalization, real-time language feedback, localized payments, and a scalable architecture for future growth.

The case shows how an AI-native engineering approach can turn AI into a built-in product layer for automation, personalization, and scalable digital delivery.

Read more: Building An AI-Powered English Learning Solution With Kyanon Digital

In conclusion

AI-native engineering teams are becoming a real operating model, not just a trend label. The market signal is strong: AI use is broad, workflow redesign is now a major differentiator, and regions such as Singapore are pushing both adoption and capability-building. At the same time, scaled maturity is still limited, which means businesses should choose cautiously and prioritize governance over hype.

For enterprises evaluating an external partner, the right question is not “Do they use AI?” The better question is “Can they deliver an AI-native workflow that improves speed, quality, and control at the same time?”

Evaluating AI-native development partners for your next project? Contact Kyanon Digital!