LLM integration services for enterprise systems in 2026 focus on three things: model-agnostic architecture that prevents vendor lock-in, agentic orchestration across ERP/CRM/HRMS platforms, and execution-time governance that enforces compliance regardless of which model generates the output. Mature integration moves well beyond API connectivity; it separates business logic from the underlying model so systems remain portable and auditable.

Global Market Insights estimates the enterprise LLM market will grow from $8.8 billion in 2025 to $71.1 billion by 2034, at a 26.1% CAGR, while Gartner predicts 40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025. The issue now is no longer whether AI can generate output. It is whether businesses can integrate it into ERP, CRM, HRMS, data, security, and compliance layers without creating a fragile stack that is expensive to govern and difficult to migrate.

That architecture gap is already visible in Singapore. IMDA reports that AI adoption among non-SMEs rose from 44.0% in 2023 to 62.5% in 2024, while SME adoption increased from 4.2% to 14.5% over the same period. Adoption is moving quickly, but scale depends less on access to models and more on data quality, integration depth, runtime controls, and governance discipline.

The operational bottleneck is increasingly clear. MuleSoft’s 2026 Connectivity Benchmark reports that 95% of organizations face integration challenges, reinforcing that the core enterprise AI problem is not access to models but the architecture required to connect them safely into real systems.

In that context, LLM integration becomes an enterprise architecture decision, not a pilot task. This article covers the architectures, decision frameworks, and risks that determine whether LLM integration becomes a durable capability or an expensive liability.

Key takeaways

- LLM integration is now infrastructure. The market is moving from isolated copilots to embedded, task-specific agents inside enterprise applications.

- Model-agnostic design matters more in 2026. The safest architecture separates business logic, orchestration, and model access so a vendor switch does not break workflows.

- Four layers usually decide success: model routing, orchestration, data and context, and governance. Weakness in any one layer creates production risk.

- Agentic orchestration changes the integration problem. Stateful, role-based agents are replacing one-shot prompts and simple API chaining in higher-value workflows.

- Governance has moved into runtime. Policy checks, audit logs, identity controls, and output controls need to operate while the system runs, not after deployment.

- Regional compliance is diverging. Europe is regulation-heavy; Singapore is assurance-led and pro-innovation; and ANZ is focused on operational resilience. Integration design has to reflect that.

- Framework choice is constraint-driven. There is no universal winner. Existing stack, governance needs, workflow complexity, and portability requirements should drive the choice.

- The wrong integration partner usually fails on architecture, not demos. The critical test is whether they can deliver production orchestration, governance, and clean system integration, not just a pilot.

Further reading

- AI and Machine Learning Development Services In Vietnam

- LLM Development Cost: What Enterprises Budget in 2026

- Top 12+ LLM Development Firms in Singapore 2026

Why LLM integration in 2026 is a different problem than in 2023

In 2023, most LLM work focused on one question: can a model answer well enough to justify a pilot? In 2026, that question is too narrow.

The real issue is whether the system can survive model deprecation, audit requests, rising token costs, agent sprawl, prompt injection, and the complexity of multi-system workflows. That is a very different operating model.

| Dimension | 2023 | 2026 |
| --- | --- | --- |
| Core question | “Can we connect to an AI API?” | “How do we scale this without lock-in or audit gaps?” |
| Deployment pattern | Single-model API calls | Multi-agent, multi-model orchestration |
| Primary concern | Output quality | Portability, governance, auditability |
| Compliance posture | Post-output review | Execution-time policy enforcement |
| Failure mode | “AI doesn’t work” | “We’re locked in and can’t migrate” |
| Integration scope | Chatbot or search widget | Full ERP/CRM/HRMS workflow coverage |

IDC expects 40% of Global 2000 job roles to involve direct interaction or collaboration with AI agents by 2026. Combined with Gartner’s forecast that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025, that explains why integration is shifting from pilot work to core enterprise architecture.


The core architectural shift: LLM-independent design

What model-agnostic architecture actually means

Model-agnostic architecture means the enterprise system does not treat one model vendor as the application. It treats the model as a replaceable dependency behind stable interfaces, policy controls, and orchestration logic. This is the most important architectural shift in enterprise LLM integration today.

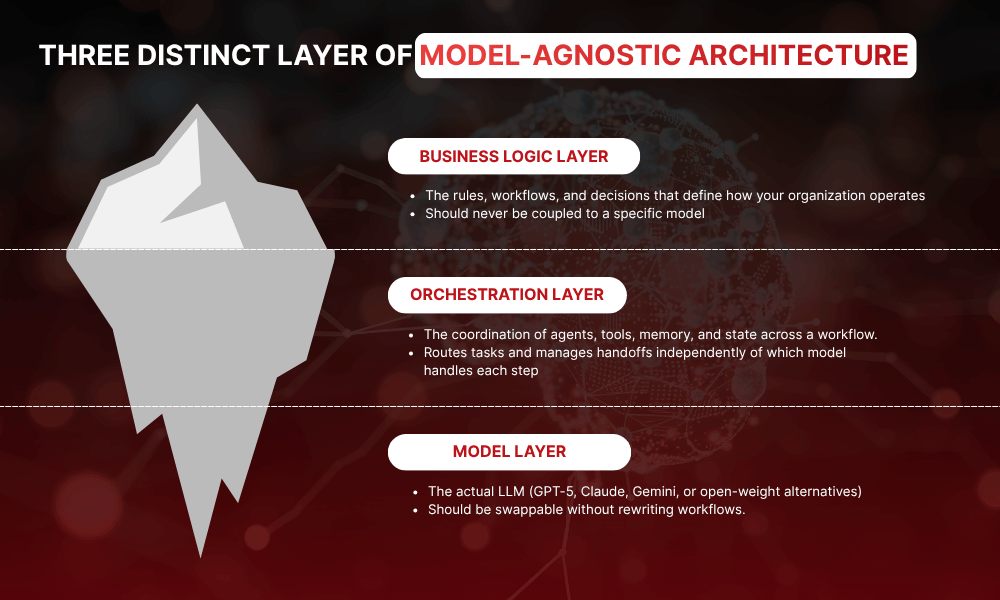

In practice, it means separating three distinct layers so that changes in one do not cascade into the others:

| Layer | What it owns | What should not live here |
| --- | --- | --- |
| Business logic layer | Workflows, approvals, domain rules, system actions | Provider-specific prompt hacks |
| Orchestration layer | Task sequencing, agent handoffs, memory, retries, tool calls | Hard-coded dependency on one frontier model |
| Model layer | Model access, fallback, routing, provider selection | Enterprise policy logic |

The practical implication: If a business wants to move from one frontier model to another, the migration should affect model access rules, benchmarks, and prompts, not the whole workflow engine. That is the difference between a portable architecture and wrapper code around one SDK.
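As a rough illustration of that separation, the sketch below keeps a piece of business logic behind a small model interface. Everything here is hypothetical: `ChatModel`, `ProviderA`, `ProviderB`, and `summarize_invoice` are names invented for the example, and the two adapters simply echo the prompt where a real adapter would wrap a vendor SDK.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Stable interface the business and orchestration layers depend on (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class ProviderA:
    """Stand-in adapter; a real one would call that provider's SDK here."""
    name: str = "provider-a"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class ProviderB:
    """A second stand-in adapter with the same interface."""
    name: str = "provider-b"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def summarize_invoice(model: ChatModel, invoice_text: str) -> str:
    """Business logic: knows the workflow step, not the vendor behind it."""
    return model.complete(f"Summarize this invoice: {invoice_text}")
```

Swapping `ProviderA()` for `ProviderB()` at the call site changes which adapter runs, while `summarize_invoice` stays untouched. That is the property the layer table describes.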

Why this matters in practice

- Vendor portability: changing model providers should not require rewriting approval logic, ERP connectors, or audit controls.

- Cost control: cheaper models can handle routine tasks, while stronger models are reserved for hard reasoning.

- Resilience: fallback paths can keep services running during provider outages, quota issues, or regional restrictions.

- Compliance: policy enforcement stays stable even when model vendors change.
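The cost-control and resilience points can be sketched as an ordered routing table with fallback. This is a minimal illustration under stated assumptions: the three model functions are stubs, `ProviderUnavailable` is a made-up exception, and the task types are examples rather than a recommended taxonomy.

```python
from typing import Callable

ModelFn = Callable[[str], str]


class ProviderUnavailable(Exception):
    """Hypothetical error an adapter raises on outage, quota, or regional block."""


def cheap_model(prompt: str) -> str:
    return f"cheap:{prompt}"


def strong_model(prompt: str) -> str:
    return f"strong:{prompt}"


def flaky_model(prompt: str) -> str:
    raise ProviderUnavailable("quota exceeded")


def route(task_type: str, routes: dict[str, list[ModelFn]], prompt: str) -> str:
    """Try each model configured for the task type in order; fall through on outage."""
    last_error = None
    for model in routes[task_type]:
        try:
            return model(prompt)
        except ProviderUnavailable as err:
            last_error = err
    raise RuntimeError(f"all providers failed for {task_type!r}") from last_error


# Routine work goes to the cheaper model first; hard reasoning has a fallback path.
ROUTES = {
    "routine": [cheap_model, strong_model],
    "hard_reasoning": [flaky_model, strong_model],
}
```

With this table, `route("routine", ROUTES, "hi")` is served by the cheap model, while `route("hard_reasoning", ROUTES, "hi")` falls back to the strong model after the first provider fails.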

These are not abstract benefits. Microsoft now positions Azure AI Foundry as an open platform for orchestrating AI apps and agents with multiple models and frameworks, while Vercel describes its AI SDK as provider-agnostic. The market itself is moving toward abstraction because enterprises no longer want application architecture tied to a single model vendor’s release cycle.

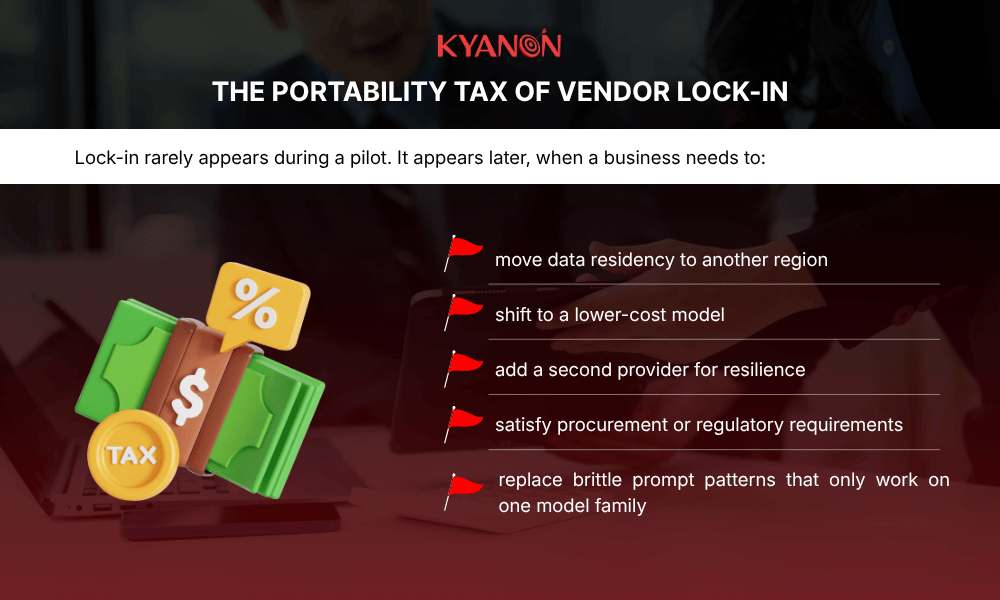

The portability tax of vendor lock-in

According to Menlo Ventures’ December 2025 enterprise AI report, Anthropic accounts for about 40% of enterprise LLM spend, up from 12% in 2023, while OpenAI has fallen to 27% from 50% in 2023.

Competitive leadership is shifting quickly: Artificial Analysis’s 2025 quarterly reports show the frontier lead moving from OpenAI to xAI and back to OpenAI as new model releases change benchmark performance, while Menlo’s enterprise data shows Anthropic retaining a strong edge in coding-heavy workloads.

Enterprises that hard-code to a single provider’s SDK absorb what integration architects call the “portability tax” when the landscape shifts:

- Migration cost: re-engineering workflows, re-formatting data pipelines, re-validating outputs across the new model’s behavior profile.

- Prompt dependency: prompt structures that work well for one model’s tokenization and instruction-following patterns frequently fail when ported to another.

- Timeline asymmetry: the migration happens on the vendor’s deprecation schedule, not yours. There is no negotiation on timing.

What to look for from an integration team

A credible integration team should be able to show that:

- Business logic is separated from model logic

- Orchestration is portable across providers

- Model switching does not require workflow redesign

- Governance controls remain consistent regardless of model choice

That is the real signal of enterprise-grade LLM integration: not fast API connectivity, but an architecture that stays portable, auditable, and maintainable as the model market changes.

Explore more: Transform How Your Business Thinks, Works, and Scales – with Generative AI

The four integration layers every enterprise needs

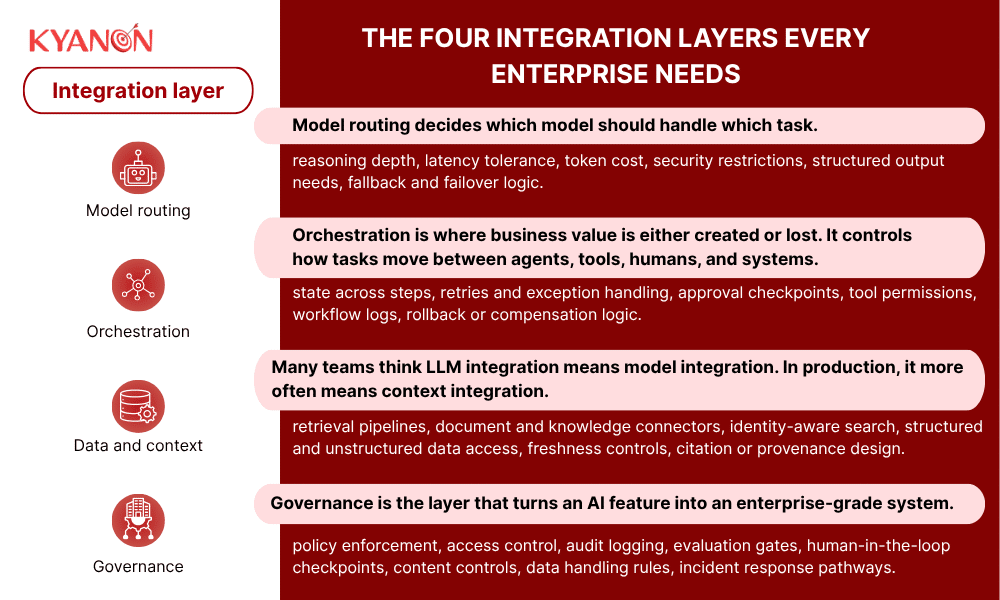

A useful way to assess LLM integration services is to test whether the provider can design all four layers well.

| Integration layer | What it does | Common failure without it |
| --- | --- | --- |
| Model routing | Sends tasks to the right model by cost, speed, or capability | One expensive model handles everything |
| Orchestration | Manages multi-agent workflows, state, tool use, and handoffs across ERP/CRM/HRMS systems | Agents operate in silos. No end-to-end process coverage. Stateless chains that fail mid-workflow with no recovery. |
| Data and context (RAG) | Connects knowledge bases, APIs, search, and real-time retrieval | Hallucination from stale or missing context. Models confidently provide incorrect information from outdated data. |
| Governance | Execution-time policy enforcement, audit logging, PII detection, and compliance monitoring | EU AI Act exposure, shadow AI risk, no accountability trail. Compliance failures discovered after output, not before. |

This four-layer framing is the clearest way to separate real enterprise integration from simple API connection work.

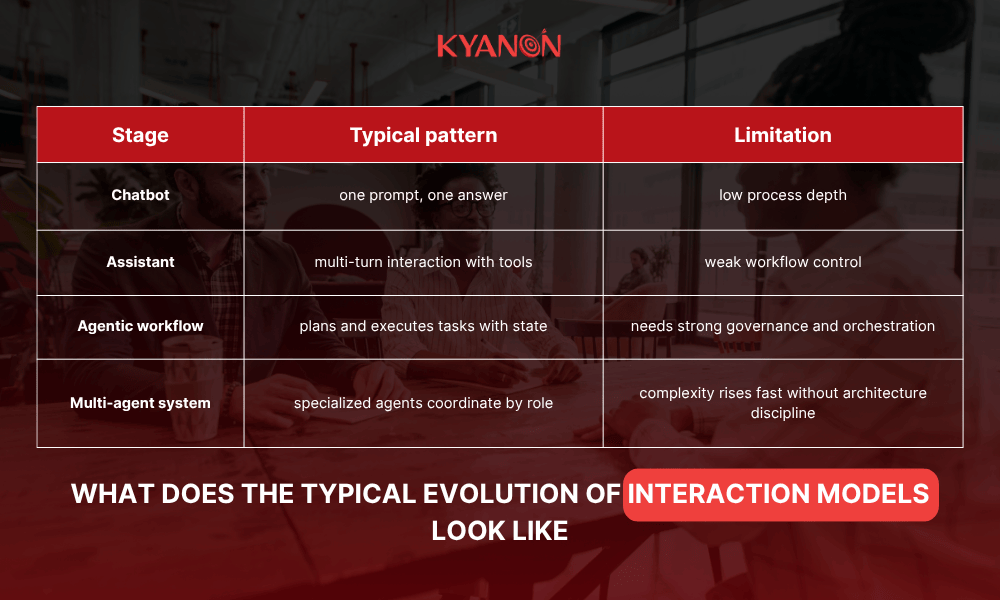

Agentic orchestration: From chatbot to digital employee

Most enterprise LLM deployments that worked in 2023 were single-turn: a user submitted a prompt and the model returned an answer. In 2026, that interaction model is structurally insufficient for most enterprise use cases.

The evolution of interaction models

- Single-turn: One prompt, one response. Works for simple Q&A and content generation. Does not coordinate across systems.

- Multi-turn: Conversational exchanges with memory of prior context. Better UX, but still reactive and user-driven.

- Agentic: The agent plans, executes a sequence of actions, monitors outcomes, and adapts across multiple systems, without a human driving each step.

LangGraph’s workflow and agent documentation reflects this shift clearly: workflows are structured and predictable, while agents are dynamic and tool-using. That distinction matters because enterprise systems need both. They need flexibility, but they also need bounded behavior.

Role-based AI: Defined scope, not a single god-agent

Mature agentic architectures do not deploy one AI agent to handle everything. They define agents by role and scope, each with specific permissions, access controls, and handoff protocols:

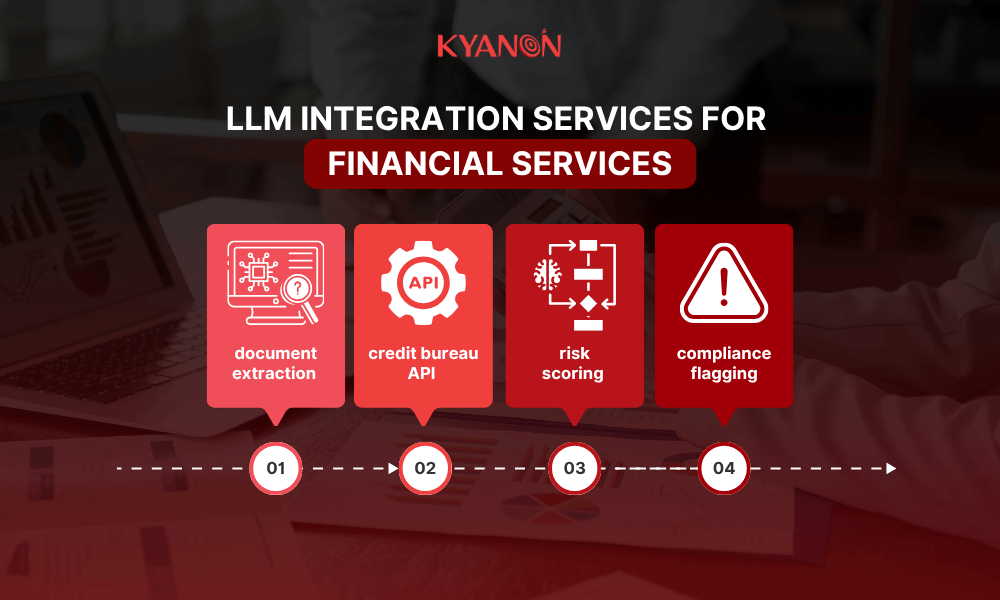

- A loan processing agent that handles document extraction → credit bureau API → risk scoring → compliance flagging. Speed and auditability are both non-negotiable.

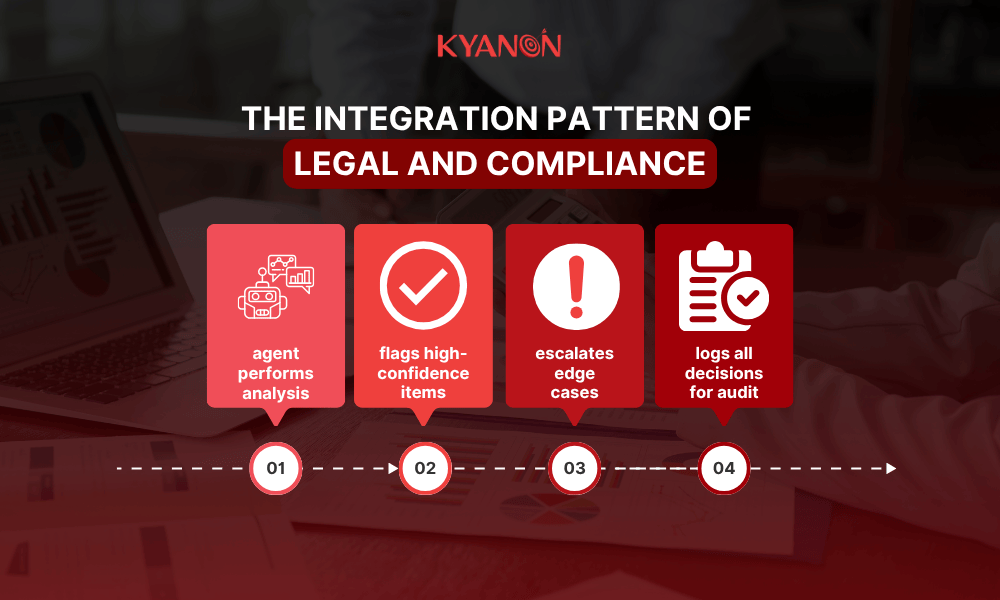

- A contract review agent that performs cross-jurisdiction diff analysis, routes exceptions for human-in-the-loop review, and logs every decision.

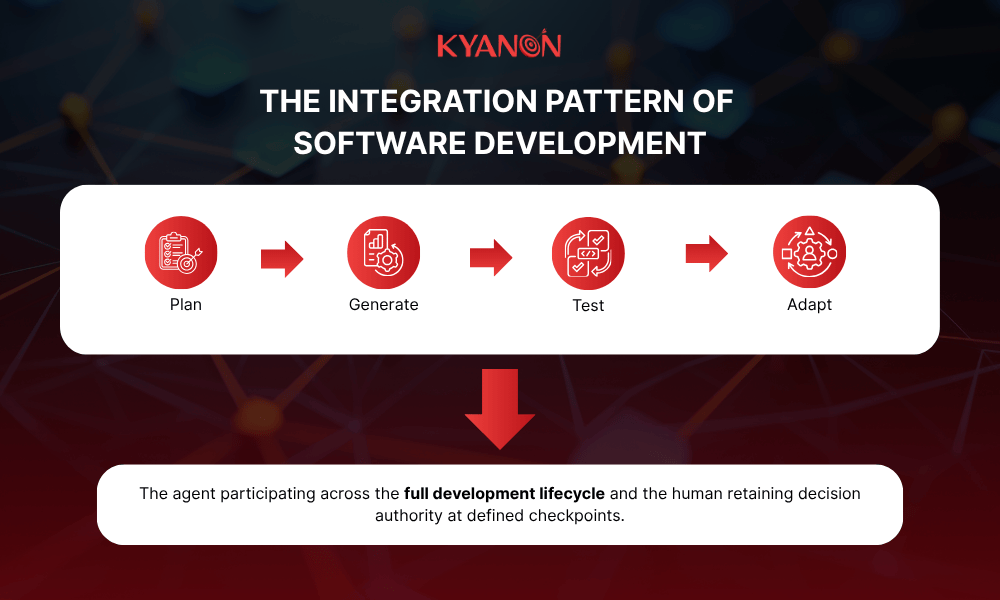

- A software development agent that participates in the full development lifecycle: plan → generate → test → adapt. This is workflow participation, not just code completion.
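One way to picture role-scoped agents is a per-role tool allowlist checked before every tool call. The sketch below is illustrative only: `AgentRole`, `call_tool`, the tool names, and the stubbed return values (including the bureau score) are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """A role is a name plus the only tools that role may invoke."""
    name: str
    allowed_tools: frozenset[str]


class ToolNotPermitted(Exception):
    """Raised when an agent reaches for a tool outside its scope."""


def call_tool(role: AgentRole, tool: str, registry: dict, **kwargs):
    """Check the role's allowlist before dispatching to the tool."""
    if tool not in role.allowed_tools:
        raise ToolNotPermitted(f"{role.name} may not call {tool}")
    return registry[tool](**kwargs)


# Stub tools; real ones would call document, bureau, or source-control systems.
TOOLS = {
    "extract_document": lambda doc: {"applicant": doc.strip()},
    "credit_bureau_lookup": lambda applicant: {"applicant": applicant, "score": 700},
    "merge_pull_request": lambda pr_id: f"merged {pr_id}",
}

LOAN_AGENT = AgentRole(
    "loan_processing",
    frozenset({"extract_document", "credit_bureau_lookup"}),
)
```

Here the loan agent can extract documents and query the bureau stub, but a call such as `call_tool(LOAN_AGENT, "merge_pull_request", TOOLS, pr_id=1)` raises `ToolNotPermitted` before the tool runs.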

Why state matters

Stateless API chaining works for demos. It breaks more easily in production because it struggles with:

- long-running tasks,

- multi-step approvals,

- recovery after failure,

- memory across handoffs,

- reliable audit reconstruction.

Stateful orchestration is what lets a system resume work, explain what happened, and prove which tool or agent took which action.
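A minimal sketch of that resume-and-explain behavior, assuming a JSON file as the checkpoint store: the step names, the `run_workflow` helper, and the file layout are hypothetical, and production frameworks provide far richer persistence than this.

```python
import json
import tempfile
from pathlib import Path

STEPS = ["extract", "verify", "score", "recommend"]


def run_workflow(state_path: Path, handlers: dict) -> dict:
    """Run STEPS in order, persisting state after each step so a rerun resumes."""
    if state_path.exists():
        state = json.loads(state_path.read_text())
    else:
        state = {"completed": [], "log": []}
    for step in STEPS:
        if step in state["completed"]:
            continue  # already done in an earlier run
        handlers[step](state)  # may raise; earlier steps stay checkpointed
        state["completed"].append(step)
        state["log"].append(f"{step}: ok")
        state_path.write_text(json.dumps(state))
    return state


def new_state_file(name: str) -> Path:
    """Create a fresh checkpoint path in a temporary directory."""
    return Path(tempfile.mkdtemp()) / f"{name}.json"
```

If the `score` handler fails on the first run, a second call with the same `state_path` skips `extract` and `verify` and continues from `score`. The `log` list gives a simple record of which steps completed.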

A concrete pattern

A loan-processing workflow is a useful mental model:

- extract data from submitted documents,

- verify fields against internal systems,

- call external bureau or risk services,

- generate a recommendation,

- flag exceptions for human review,

- store logs and evidence trails.

That is not a chatbot. It is an orchestrated operational process with AI components inside it.

Execution-time governance: Compliance is not a post-processing step

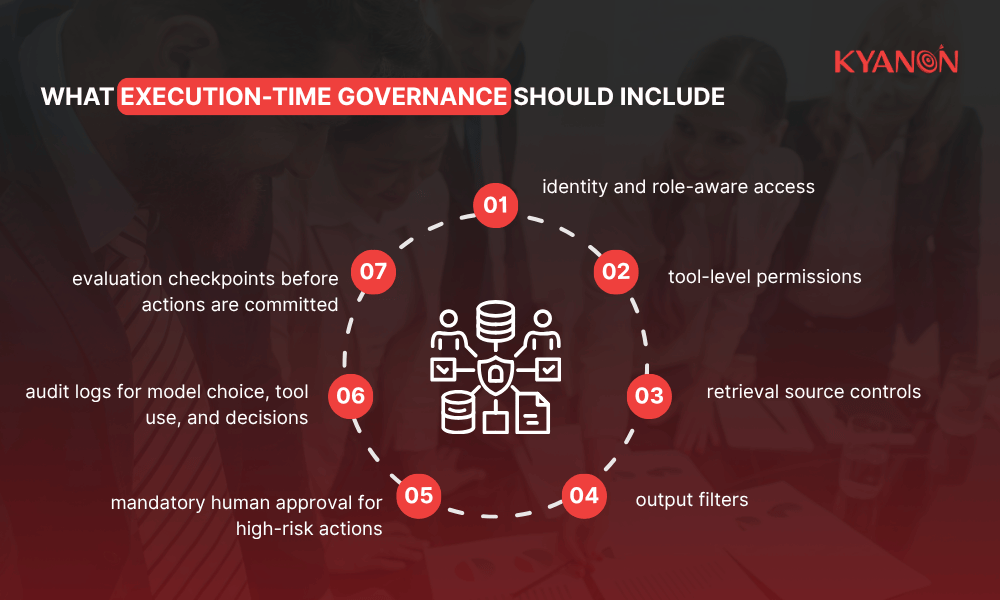

Execution-time governance means policies are enforced while the system runs, not only after output appears. This is the right frame for enterprise LLM systems because most real risk sits in tool use, data access, agent permissions, and workflow actions, not only in raw text generation.

What execution-time governance means in practice

In practice, that translates into four controls:

- PII detection and blocking before data enters the model’s context window.

- Output validation against defined compliance rules before results are returned to downstream systems.

- Audit logging at each decision point, not as a post-process batch job.

- Model-independent enforcement: the governance layer applies the same policies regardless of which model or provider handles a given task.
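The four controls above can be sketched as a wrapper that sits around any model call. This is a simplified illustration, not a compliance-grade implementation: the email regex stands in for real PII detection, the banned-phrase rule stands in for real output policy, and `governed_call` and `AUDIT_LOG` are names made up for the example.

```python
import re
from datetime import datetime, timezone
from typing import Callable

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")  # toy PII pattern, emails only
AUDIT_LOG: list[dict] = []  # in-memory stand-in for an append-only audit store


def audit(event: str, detail: str) -> None:
    """Record a decision point at the moment it happens."""
    AUDIT_LOG.append(
        {"ts": datetime.now(timezone.utc).isoformat(), "event": event, "detail": detail}
    )


def redact_pii(text: str) -> str:
    """Strip emails before the text can enter a model's context window."""
    redacted, count = EMAIL.subn("[REDACTED_EMAIL]", text)
    audit("pii_check", f"{count} email(s) redacted")
    return redacted


def validate_output(text: str, banned_phrases: tuple[str, ...]) -> str:
    """Block outputs that break a rule before they reach downstream systems."""
    for phrase in banned_phrases:
        if phrase.lower() in text.lower():
            audit("output_blocked", phrase)
            raise ValueError(f"output violates policy: {phrase!r}")
    audit("output_allowed", "passed all rules")
    return text


def governed_call(
    model: Callable[[str], str],
    prompt: str,
    banned_phrases: tuple[str, ...] = ("guaranteed approval",),
) -> str:
    """Apply the same checks regardless of which model function is passed in."""
    return validate_output(model(redact_pii(prompt)), banned_phrases)
```

Because `governed_call` accepts any callable, the same policy path applies whichever provider adapter is plugged in, which is the model-independence point in the last bullet.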

EU AI Act and NIST AI RMF: What integration architecture must support

The EU AI Act introduced high-risk AI classification triggers that apply directly to enterprise LLM deployments in HR, financial services, legal decision support, and customer-facing systems. Compliance is not a future concern: obligations are phasing in through 2025–2027, so systems deployed now need to be designed for them.

- High-risk system classification requires conformity assessment, transparency obligations, and human oversight mechanisms built into the architecture, not added later.

- NIST AI RMF alignment requires integration architecture that supports risk measurement, ongoing monitoring, and documented accountability across the full model lifecycle.

- The governance layer must be model-independent by design; compliance posture cannot depend on which model happens to be active at a given moment.

Regional differences that matter

| Region | What is changing | Integration implication |
| --- | --- | --- |
| EU | AI Act obligations are phasing in through 2025–2027 | Stronger documentation, governance, and risk classification design |
| Singapore | AI Verify ecosystem and assurance efforts are expanding; MAS proposed AI risk guidelines for finance | More emphasis on testing, assurance, evidence, and controlled deployment |
| ANZ | APRA CPS 230 is in force | Stronger third-party resilience, operational controls, and service-provider oversight |

Europe is moving fastest toward a formal regulatory structure. Singapore is more assurance-driven and pragmatic, using testing frameworks and sector guidance to raise standards. ANZ is pushing harder on operational resilience and third-party control. A single integration pattern will not satisfy all three equally well.

Shadow AI: The new compliance risk

Shadow AI (non-technical users building production workflows with consumer AI tools and no IT oversight) has emerged as a more significant compliance risk than unauthorized SaaS adoption.

The reason is structural: shadow SaaS generates data exposure. Shadow AI generates production artifacts: code, workflows, and automated decisions that enter business operations without audit trails, validation, or governance coverage.

Addressing shadow AI is not a policy problem. It is an architecture problem: enterprises need governed, accessible AI workflows that are easier for non-technical users to use safely than the consumer alternatives they would otherwise reach for.

Integration framework selection: How to choose

Framework selection is not about which tool is most popular. It is about which tool fits the existing stack, compliance requirements, and long-term model portability goals. The table below provides a decision lens, not a ranking.

| If your main constraint is… | Consider… | Why |
| --- | --- | --- |
| Microsoft stack already dominates | Azure AI Foundry / Microsoft Agent Framework | Strong ecosystem fit, enterprise governance, broad model and SDK support |
| API governance at scale | Kong AI Gateway | Centralized routing, security, observability, and cost controls |
| Complex stateful multi-agent workflows | LangGraph | Built for long-running, stateful workflows and agents |
| Frontend-heavy product teams and fast developer experience | Vercel AI SDK | Provider-agnostic toolkit with strong web app ergonomics |
| True LLM independence above all else | Custom abstraction layer or model-agnostic framework stack | Portability takes priority over convenience |

Note: Kyanon Digital evaluates framework fit based on existing stack, compliance requirements, and long-term model portability, not framework popularity. The right framework is the one that constrains your options least over a three-to-five-year horizon.

Where each of these vendors is heading:

- Microsoft is strengthening unified governance and model choice in Foundry.

- Kong is positioning the gateway as the control plane for AI traffic.

- LangGraph is becoming a default reference for stateful agent workflows.

- Vercel is optimizing for product teams that need fast application delivery with provider flexibility.

Selection rules that prevent bad decisions

- Do not choose a framework only because it is popular.

- Do not optimize for demo speed if governance is the real blocker.

- Do not over-engineer a portability layer if the business is fully committed to one cloud and one region.

- Do not under-engineer abstraction if procurement, residency, or resilience may force a provider change within 12–24 months.

Industry-specific integration patterns

Integration architecture is not generic. The patterns that matter most differ meaningfully by vertical. Below are the integration use cases with the highest production adoption and the clearest architecture requirements.

Financial services

A common pattern is document extraction, bureau or core-system lookup, risk analysis, exception handling, and compliance flagging in one orchestrated flow. The integration priority is not only speed. It is explainability, auditability, resilience, and control over service-provider dependencies.

That is where MAS, APRA, and EU requirements all push design discipline higher.

Key requirements: execution-time governance, human-in-the-loop at exception points, full audit logging per regulatory obligation (MAS TRM for Singapore, APRA for ANZ, FCA for UK).

Legal & compliance

Typical use cases include contract summarization, obligation extraction, clause comparison, and cross-jurisdiction review support. These can deliver real productivity gains, but they usually need stronger human review and provenance because the cost of a wrong answer is high.

The governance layer is not optional in legal workflows. It is the primary deliverable.

Software development

The market is moving from code completion toward AI systems that plan, generate, test, debug, and adapt across the development lifecycle. Microsoft’s 2025 Build messaging around the “open agentic web” and the growth of workflow-centric tools show that engineering workflows are becoming one of the main proving grounds for agentic integration.

Retail & e-commerce

The integration pattern is often less about chat and more about personalization, catalog enrichment, product discovery, merchandising support, dynamic pricing inputs, and service workflow automation. These use cases depend heavily on connected data and strong guardrails because bad recommendations can affect revenue, margin, and customer trust.

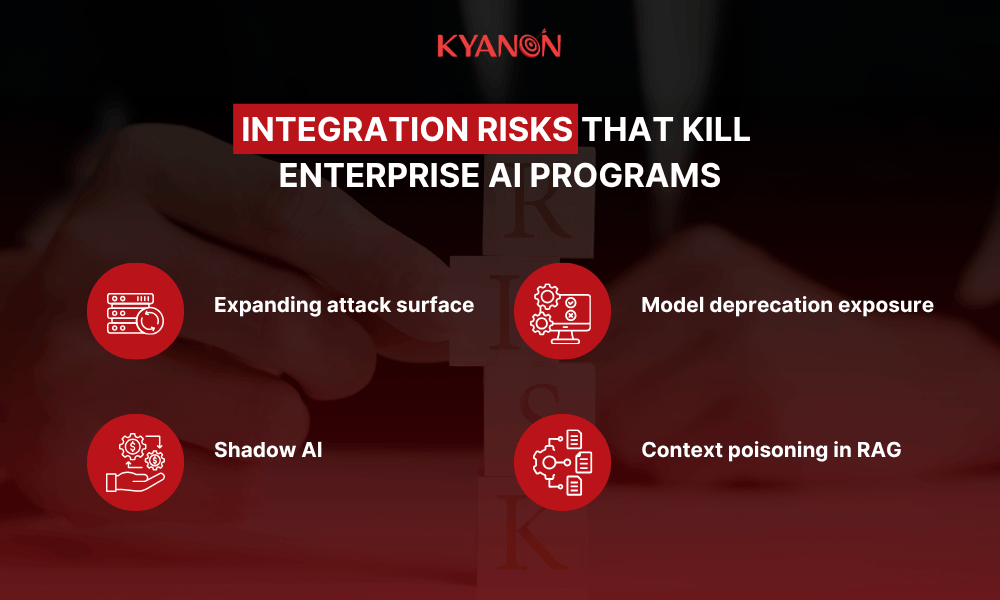

Integration risks that kill enterprise AI programs

These risks should be treated as design decisions, not reasons to stop.

Expanding attack surface

OWASP’s GenAI security project places prompt injection at the top of the 2025 LLM risk set. In practical terms, that means user input, retrieved documents, web content, and connected tools can all become attack paths if the system trusts them too much.

Architectural response: isolate tool permissions, treat external content as untrusted, validate retrieval sources, and keep sensitive actions behind approval or policy gates.

Shadow AI

Shadow AI is more dangerous than ordinary shadow SaaS because it can generate code, workflows, decisions, and operational artifacts without approved controls. The more low-code and no-code agent tools spread, the more this becomes a governance problem, not only a procurement problem. MAS’s proposed AI risk guidelines and Singapore’s assurance direction both point toward stronger control expectations here.

Architectural response: central gateways, identity controls, approved tool registries, and mandatory logging.

Model deprecation exposure

If the entire workflow is built around one provider’s API semantics, the enterprise is forced to migrate on the vendor’s timeline, not its own.

Architectural response: abstraction at the model layer, standardized output contracts, benchmark suites, and controlled fallbacks.
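A standardized output contract and a small benchmark suite might look like the sketch below. The `RiskDecision` schema, the allowed labels, and `contract_pass_rate` are assumptions made for illustration. The idea is that a candidate replacement model is scored against the same contract before any traffic is moved to it.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RiskDecision:
    """Hypothetical contract every model's output must satisfy."""
    decision: str      # one of ALLOWED
    confidence: float  # between 0.0 and 1.0 inclusive


ALLOWED = {"approve", "review", "reject"}


def parse_decision(raw: str) -> RiskDecision:
    """Parse raw model text into the contract, rejecting anything off-spec."""
    data = json.loads(raw)
    decision = data["decision"]
    confidence = float(data["confidence"])
    if decision not in ALLOWED:
        raise ValueError(f"unknown decision: {decision!r}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence}")
    return RiskDecision(decision, confidence)


def contract_pass_rate(model: Callable[[str], str], prompts: list[str]) -> float:
    """Fraction of benchmark prompts for which the model honors the contract."""
    passed = 0
    for prompt in prompts:
        try:
            parse_decision(model(prompt))
            passed += 1
        except (ValueError, KeyError, TypeError):
            pass  # malformed JSON, missing field, or wrong shape
    return passed / len(prompts)
```

Downstream workflow code consumes `RiskDecision` objects only, so a provider change is evaluated by rerunning `contract_pass_rate` on the new adapter rather than by rewriting the workflow.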

Context poisoning in RAG

Poor or poisoned retrieval pipelines can make even a strong model produce bad outputs. This is not mainly a model problem. It is a retrieval, provenance, and governance problem.

NIST’s GenAI profile and OWASP’s 2025 LLM risk framing both reinforce that system-level controls matter.

Architectural response: source validation, freshness rules, chunking discipline, access-aware retrieval, and provenance tagging.
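Several of these controls can be applied as a filter between retrieval and prompt assembly. The sketch below is a simplified illustration: the `Chunk` fields, the trusted-source list, and the one-year freshness window are assumed values chosen for the example, not recommendations.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage with the metadata the filter needs."""
    text: str
    source: str
    updated: date
    allowed_groups: frozenset[str]


TRUSTED_SOURCES = {"policy-wiki", "contracts-db"}  # assumed allowlist
MAX_AGE = timedelta(days=365)                      # assumed freshness rule


def filter_chunks(chunks: list[Chunk], user_groups: set[str], today: date) -> list[str]:
    """Keep only trusted, fresh, access-permitted chunks, tagged with provenance."""
    kept = []
    for chunk in chunks:
        if chunk.source not in TRUSTED_SOURCES:
            continue  # source validation
        if today - chunk.updated > MAX_AGE:
            continue  # freshness rule
        if not (chunk.allowed_groups & user_groups):
            continue  # access-aware retrieval
        kept.append(
            f"[source={chunk.source} updated={chunk.updated.isoformat()}] {chunk.text}"
        )
    return kept
```

The provenance prefix travels with each passage into the prompt, so an answer can later be traced back to the document and version that informed it.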

What to look for in an LLM integration partner

When evaluating a team, the critical question is not whether they can build a chatbot. It is whether they can design an integration architecture that survives production reality.

Core checklist

- Builds model-agnostic architecture by default: A strong team should talk about abstraction, routing, fallback, and portability early.

- Has delivered production agentic workflows: Not just prompt demos. Look for evidence of state, tool use, workflow recovery, and operational monitoring.

- Treats governance as a deliverable: Audit logging, policy enforcement, access control, evaluation, and human review should be in scope.

- Understands your compliance environment: EU AI Act, MAS expectations, APRA operational resilience, and sector-specific controls should shape the design.

- Can integrate with your real stack: ERP, CRM, HRMS, internal APIs, document systems, identity, and security tooling matter more than a polished prototype.

- Has a clear IP and data-handling posture: Ownership, retention, provider boundaries, and testing environments should be explicit.

These criteria are a practical way to separate system integrators from demo builders.

Questions worth asking in vendor meetings

| Question | Why it matters |
| --- | --- |
| How do you prevent provider lock-in? | Tests architectural maturity |
| Where do policy checks run? | Reveals whether governance is real or cosmetic |
| How do you handle state and recovery in multi-step workflows? | Tests production readiness |
| How do you validate retrieval quality and source trust? | Tests RAG discipline |
| What logs are available for audits and incident review? | Tests compliance readiness |
| How do you partition model, orchestration, and business logic? | Tests portability and maintainability |
| How do you handle region-specific requirements? | Tests ability to work across EU, Singapore, and ANZ contexts |

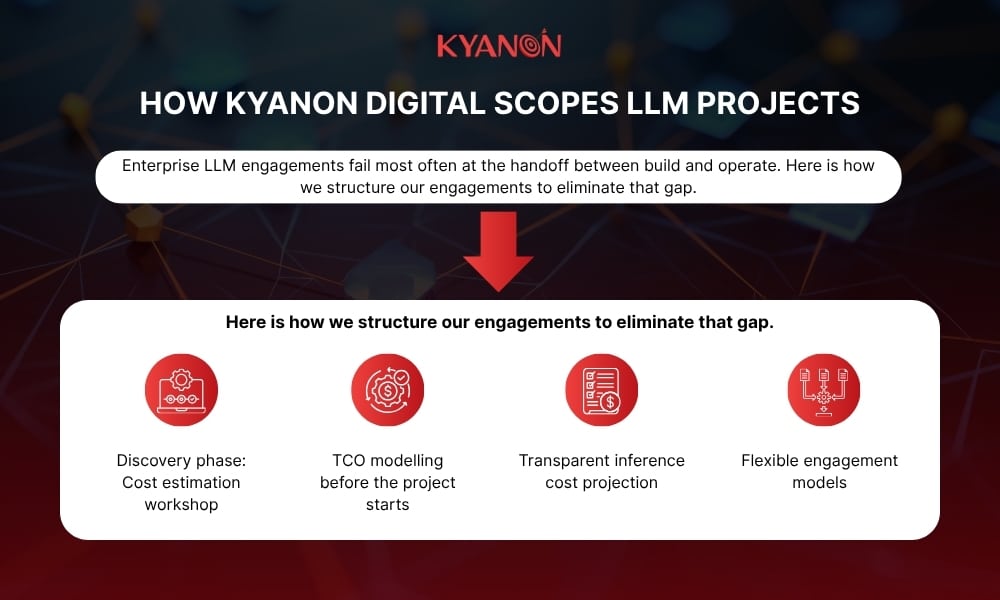

Why choose Kyanon Digital as your partner?

Kyanon Digital delivers enterprise AI integration capabilities through modular architecture, API-led system connectivity, flexible cloud or on-premises deployment, and governance controls that support scalable, production-ready operations.

- Integration-first delivery: Connects ERP, CRM, cloud platforms, legacy systems, third-party tools, and data environments into one operating flow.

- Architecture built for change: Uses composable architecture, middleware, and API management so systems can scale, extend, or change without full redesign.

- Lower lock-in exposure: Supports modular components, standardized integration patterns, and flexible deployment across client-controlled environments.

- Governance built into delivery: Includes security, monitoring, observability, access control, encryption, and auditability as part of the implementation model.

- Applied AI implementation: Has experience with RAG-based systems and multi-model environments where portability, retrieval quality, and control matter.

- Capacity for larger programs: Brings the scale, engineering depth, and delivery structure needed for complex multi-system transformation.

- Fit for production environments: The delivery model is designed to support integration depth, architecture flexibility, governance discipline, and production rollout.

Case study: Building an AI-powered legaltech platform with LLM development

Client’s challenge

A leading law firm sought to automate document review and legal research processes while maintaining data security and accuracy. Traditional keyword-based search systems were slow, inconsistent, and unable to understand legal context, leading to wasted time and missed insights.

Kyanon Digital’s solution

As the client’s LLM development company, Kyanon Digital built an AI-powered LegalTech platform that leveraged fine-tuned Large Language Models (LLMs) to enhance legal document understanding and automation. The solution included:

- Developing a domain-specific LLM optimized for legal terminology and Vietnamese-English bilingual data.

- Implementing secure, on-premise deployment, ensuring client confidentiality.

- Integrating intelligent document search, summarization, and case law analysis features.

Results & impact

- Reduced legal research time by over 60%, improving operational efficiency.

- Delivered accurate, context-aware responses with enhanced data protection.

- Demonstrated how LLM development can drive real-world transformation in the legal sector.

Read the full case study: Building an AI-powered Legaltech Platform with Kyanon Digital

In conclusion

The enterprise LLM integration problem in 2026 is an architecture problem, not a model problem. The organizations that are scaling AI successfully are not the ones that chose the best model; they are the ones that built systems designed to survive model deprecations, comply with governance requirements, and extend across enterprise workflows without creating vendor dependencies they cannot exit.

Ready to build LLM integration that can scale in production?

Kyanon Digital helps enterprises design and implement model-agnostic LLM integration architectures, with governance, agentic orchestration, and compliance considered from day one. Contact us to explore the right approach for your systems!
